vLLM Community Collaboration Newsletter

A roundup of community contributions and milestones from the vLLM ecosystem in February 2026.

Disclaimer: This newsletter consists of personal contribution notes written from an individual builder's perspective. All topics, observations, and opinions expressed are solely my own.

1. Speculative Decoding: P-EAGLE Goes Live in vLLM 0.16.0

On February 26, 2026, vLLM 0.16.0 shipped with P-EAGLE support — a meaningful milestone for speculative decoding in production inference. The release bundles several related improvements:

- Unified Parallel Drafting for speculative decoding #32887

- Spec decode now works with structured outputs #33374

- Penalty application in Model Runner V2 #33251

What is P-EAGLE?

P-EAGLE (Parallel-Drafting EAGLE) rethinks the drafting step in EAGLE-style speculative decoding. Where EAGLE generates K draft tokens through K sequential forward passes, P-EAGLE produces all K in a single forward pass, removing K-1 draft passes from every speculation step. The result is a throughput lift that needs little beyond a P-EAGLE draft checkpoint for the target model:

- 1.36× output tokens/sec on GPT-OSS 120B

- 1.17× output tokens/sec on Qwen3-Coder 30B

Both models match the acceptance length of autoregressive EAGLE-3 in vLLM benchmarks, with no quality regression.
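To make the sequential-versus-parallel distinction concrete, here is a minimal toy sketch in Python. It is not vLLM code and not the P-EAGLE implementation: `ToyDrafter`, `draft_sequential`, `draft_parallel`, and `step_speedup` are hypothetical names invented for illustration, the "model" only counts forward passes, and the latency numbers in the usage lines are made up rather than measured. The cost model also assumes that one parallel draft pass costs about the same as one sequential draft pass, which may not hold in practice.

```python
from dataclasses import dataclass


@dataclass
class ToyDrafter:
    """Hypothetical stand-in for a draft head: counts passes, runs no network."""

    forward_passes: int = 0

    def forward(self, context: list[int], num_positions: int) -> list[int]:
        # One call is one forward pass, however many positions it predicts.
        self.forward_passes += 1
        # Deterministic fake token ids so the example is reproducible.
        base = sum(context)
        return [(base + i + 1) % 1000 for i in range(num_positions)]


def draft_sequential(drafter: ToyDrafter, context: list[int], k: int) -> list[int]:
    """EAGLE-style drafting: each draft token takes its own pass and feeds the next."""
    draft: list[int] = []
    for _ in range(k):
        (token,) = drafter.forward(context + draft, num_positions=1)
        draft.append(token)
    return draft


def draft_parallel(drafter: ToyDrafter, context: list[int], k: int) -> list[int]:
    """P-EAGLE-style drafting: all k draft positions come from a single pass."""
    return drafter.forward(context, num_positions=k)


def step_speedup(t_target: float, t_draft: float, k: int) -> float:
    """Per-step latency ratio (sequential / parallel) at equal acceptance length.

    Assumption: a parallel draft pass costs the same as one sequential draft pass.
    """
    return (t_target + k * t_draft) / (t_target + t_draft)


seq, par = ToyDrafter(), ToyDrafter()
draft_sequential(seq, [1, 2, 3], k=4)
draft_parallel(par, [1, 2, 3], k=4)
print(seq.forward_passes, par.forward_passes)  # 4 1

# Illustrative latencies only (arbitrary units), not benchmark data.
print(round(step_speedup(t_target=10.0, t_draft=1.0, k=4), 2))  # 1.27
```

Under this simplified model, the gain grows with K and with the share of step time spent drafting, and it only carries over to end-to-end throughput if the acceptance length stays the same, which is why the acceptance-length parity noted above matters.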

Resources:

- Preprint: arxiv.org/abs/2602.01469

- Hugging Face checkpoints: amazon/gpt-oss-120b-p-eagle · amazon/Qwen3-Coder-30B-A3B-Instruct-P-EAGLE

2. vLLM SIG: Model Acceleration (Quantization & Speculators)

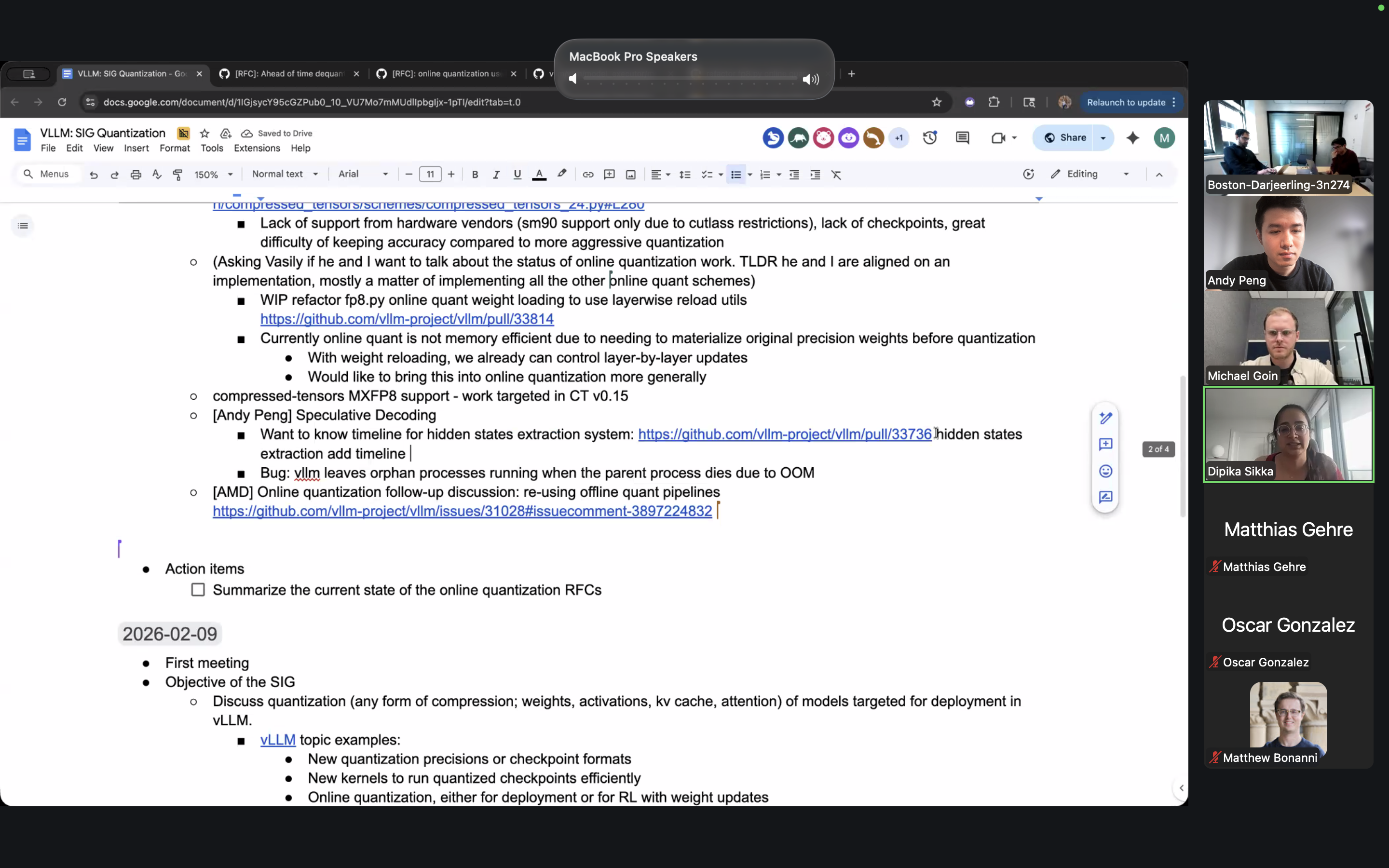

February marked the launch of the Model Acceleration SIG within the vLLM community, a dedicated working group covering quantization and speculative decoding. Two meetings took place this month, with the following items on the agenda:

- Discussed the hidden states extraction RFC: #33118 — a prerequisite for enabling EAGLE-style draft heads without model-specific hooks.

- Reported an OOM-handling bug that surfaced during testing with Qwen3-Coder 30B: #34643.

- Explored the interaction between LoRA adapters and EAGLE draft heads — specifically how LoRA fine-tuning affects acceptance length and what that means for speculative decoding pipelines.

- Discussed feasibility of integrating these patterns upstream in vLLM.

3. Speeding Up Multi-LoRA Serving for MoE Models

Also on February 26, the vLLM team published work on efficient multi-LoRA serving for Mixture-of-Experts models — a problem that grows quickly in complexity as the number of fine-tuned variants scales. The approach targets the specific characteristics of MoE routing to reduce overhead when switching between adapters.

Read more:

- vLLM announcement on X

- vLLM blog: Multi-LoRA Serving

- Amazon Science blog: Efficiently Serve Dozens of Fine-Tuned Models with vLLM on Amazon SageMaker AI and Amazon Bedrock